There's a land grab happening in AI search, and most marketers are buying the wrong parcels.

Since ChatGPT went mainstream, a new industry has emerged selling "AI SEO" as if it's a completely separate discipline from what you were already doing. The playbooks are full of tactics: add an llms.txt file to your site, restructure headings as questions, include "key takeaways" at the top of every article, implement AI-specific schema markup, get your brand onto Reddit and Wikipedia because those are where LLMs pull their data.

Here's the problem: most of these tactics don't work. The agencies selling them have something new to add to their retainers. Marketing teams are burning budget implementing changes that studies show have no measurable effect on AI visibility.

Meanwhile, the brands quietly appearing in ChatGPT and Perplexity responses for their most important keywords are doing something fundamentally different — and mostly un-glamorous. They're doubling down on the content and SEO fundamentals that have always mattered. They're showing up in web searches that AI tools perform to generate their answers. They're getting mentioned on the industry sites those AI tools actually cite.

This isn't a contrarian take. It's what the data shows.

In a Nutshell

- AI tools like ChatGPT and Perplexity search the web when users ask product-related questions. They rely on third-party search engines (Bing, Google) to ground their responses in current information. If you rank in traditional search, those AI tools can find you.

- The correlation between Google rankings and AI mentions is striking: when a site ranks on Google's first page for a keyword, it appears in ChatGPT and Perplexity responses 77% of the time. That jumps to 82% for top-three positions.

- Top-of-funnel content gets zero AI visibility in the sense that matters — LLMs answer informational queries directly without mentioning brands. Bottom-of-funnel content (product comparisons, best-of lists, use-case queries) is where brands get cited.

- For product-related queries, LLMs cite industry-specific sites 86% of the time vs. 16% for generic sites like Reddit, so "get on Reddit for AI SEO" is mostly wrong. Off-site brand mentions belong on the industry sites that actually get cited.

- llms.txt, question-formatted headings, FAQ schema: tested extensively, no measurable impact. Not worth prioritizing over the fundamentals.

- The attribution model for AI-influenced conversions is broken by default. Someone finds you through ChatGPT, then Googles your brand name. That conversion shows up as branded organic or direct — not AI. You need "how did you hear about us" data to see the real picture.

Table of Contents

- How AI Search Actually Works

- Why Most AI SEO Advice Is Wrong

- The GEO Priorities Framework: A 3-Tier Model

- Tier 1: Bottom-of-Funnel Content That Ranks

- Tier 2: Off-Site Brand Mentions on the Right Sites

- Tier 3: On-Site Tactics (Last Priority)

- The Attribution Problem: Measuring AI Visibility

- What This Means for Your 2026 Content Strategy

- The Bottom Line

- Frequently Asked Questions

How AI Search Actually Works

Before you can optimize for AI search, you need to understand the mechanism — and it's simpler than the AI SEO industry would have you believe.

When someone asks ChatGPT "what's the best project management software for remote teams" or "top performance marketing agencies in the US," the AI doesn't answer from its training data alone. It searches the web.

ChatGPT's search feature uses third-party search engines including Bing and Google. Perplexity's CEO has publicly acknowledged their reliance on third-party web crawlers in addition to their own indexing. When a user asks a product-related question, these AI tools search the web, look at what's ranking, synthesize the information they find, and deliver a recommendation — in their own words — to the user.

This is the mechanism that explains everything about AI SEO:

If your content ranks well in traditional search for queries where users are evaluating products, you will be found by the LLMs that answer those queries.

That's it. No black magic. No AI-specific metadata. The same factors that help you rank in Google — domain authority, backlink profile, content quality and depth, topical relevance — are the factors that determine whether AI tools surface your brand.

Research analyzing 400+ bottom-of-funnel keywords across 16 clients found a consistent pattern: when those clients ranked on Google's first page for a keyword, they showed up in ChatGPT and Perplexity responses for the same keyword 77% of the time. For top-three positions, that rate rose to 82%.
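To run the same kind of tally on your own keywords, a few lines of Python are enough. This is a minimal sketch, not the study's method: the records are made-up placeholders, and the field names (`google_position`, `in_ai_answer`) are assumptions for illustration.

```python
from dataclasses import dataclass

@dataclass
class KeywordCheck:
    keyword: str
    google_position: int  # organic rank on Google, 1 = top result
    in_ai_answer: bool    # did the brand appear in the AI tool's answer?

def appearance_rate(checks, max_position):
    """Share of keywords ranking at or above max_position whose AI answer mentions the brand."""
    ranked = [c for c in checks if c.google_position <= max_position]
    if not ranked:
        return None  # no keywords in that rank range, so no rate to report
    return sum(c.in_ai_answer for c in ranked) / len(ranked)

# Hypothetical sample data, not taken from the research cited above.
checks = [
    KeywordCheck("best dispatch software", 2, True),
    KeywordCheck("dispatch software alternatives", 5, True),
    KeywordCheck("trucking tms comparison", 8, False),
    KeywordCheck("dispatch software for small fleets", 1, True),
    KeywordCheck("fleet routing tools", 14, False),
]

first_page = appearance_rate(checks, 10)  # positions 1-10
top_three = appearance_rate(checks, 3)    # positions 1-3
```

Comparing `first_page` with `top_three` on your own data shows whether higher rankings track with more AI mentions in your category.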

The implication is significant: your AI SEO strategy is, in large part, your SEO strategy. The fundamentals haven't changed. The stakes have gotten higher.

Why Most AI SEO Advice Is Wrong

The tactics getting the most attention in the AI SEO conversation are the ones that sound new and AI-specific. That's a problem, because most of them have no evidence behind them.

llms.txt Files

The llms.txt standard was proposed by Jeremy Howard (founder of fast.ai) as a file brands could add to their website to help AI crawlers understand their content — similar to how robots.txt guides search engine crawlers. The idea sounds logical.

The problem: there's no evidence that OpenAI, Anthropic, or other major LLM providers actually use it. Tests run across multiple clients — sites with and without llms.txt — show no measurable difference in AI visibility. Howard proposed it as a good idea for the industry to adopt. That's not the same as the industry adopting it.

Question-Formatted Headings

The advice: rewrite your H2s and H3s as questions instead of statements, because LLMs supposedly process Q&A formats better.

The problem: LLMs are built specifically to understand natural language the way humans do. They don't need content reformatted in a specific way. If your content is clear, specific, and useful in declarative form, the AI understands it perfectly. Rewriting headings as questions for AI's benefit is adding complexity without adding value.

FAQ Sections and Key Takeaways

Same category. The logic is that structured extraction helpers make it easier for AI to synthesize your content. The reality: sites without FAQ sections and key takeaways show up in AI responses all the time. These formatting elements may be useful for human readers — they're just not a significant lever for AI visibility.

"Get on Reddit and Wikipedia"

Infographics showing which domains AI tools cite most frequently circulate constantly online, usually showing Reddit, Wikipedia, Forbes, and similar high-authority general sites at the top.

The inference — that you should target these platforms for AI visibility — is based on a fundamental misunderstanding of how LLMs source responses.

The problem with this advice is that it conflates two different things: overall citation volume and product-specific citation behavior.

Yes, Reddit ranks extensively on Google for discussion and informational queries. And yes, AI tools do cite Reddit — heavily, for those types of questions. But when the query shifts to product evaluation ("best dispatch software for trucking companies"), the citation behavior changes entirely.

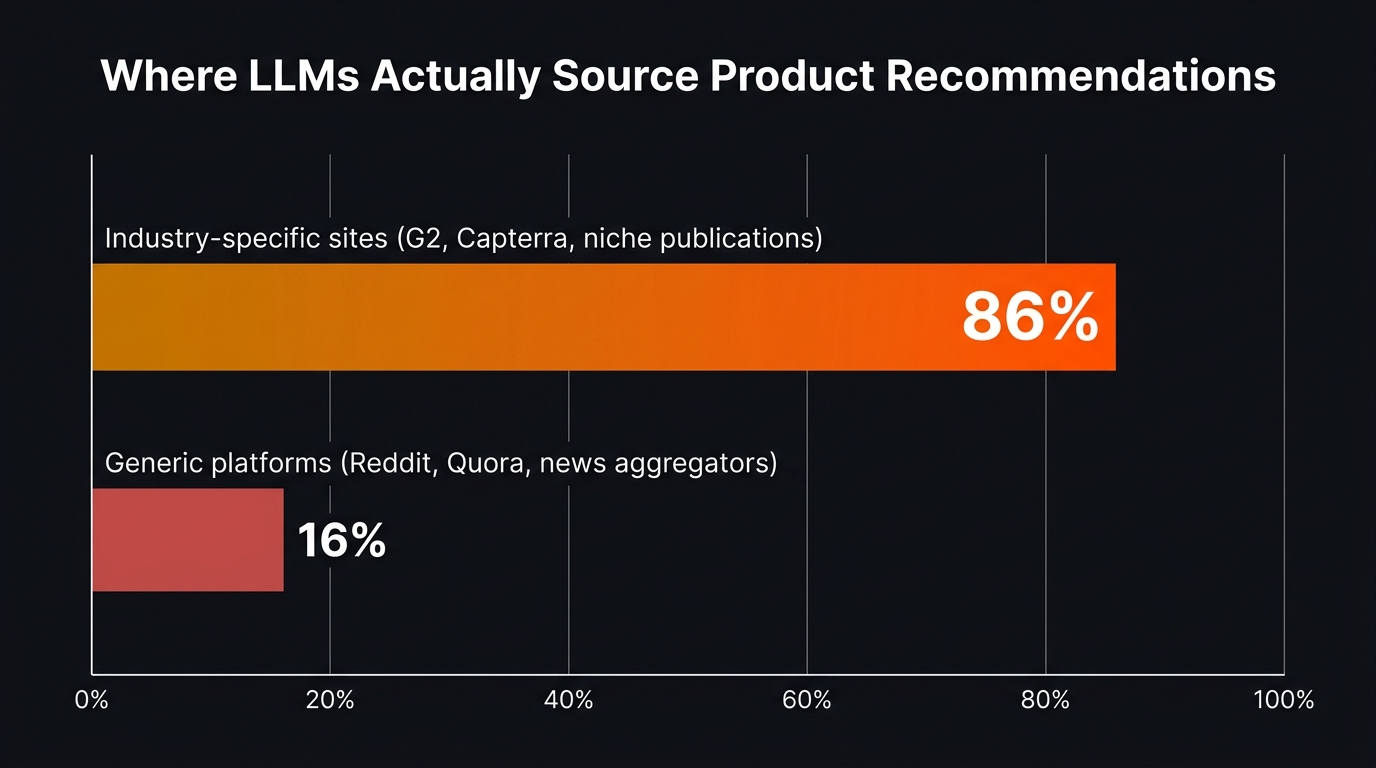

For product-specific queries — where brand citations actually matter — LLMs cite industry-specific review sites, comparison pages, and category publications 86% of the time, and generic platforms like Reddit only 16% of the time. The AI is searching the web for what's most relevant to that specific query, not pulling from a universal "most-cited domains" list.

A Reddit presence is fine for brand awareness and discussion-query visibility. It's not a reliable strategy for the product recommendation queries where your brand actually needs to show up.

The GEO Priorities Framework: A 3-Tier Model

Given what we know about how AI search actually works, a rational strategy organizes tactics by impact — not by how new or AI-specific they sound.

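The framework that makes the most sense is what researchers at Grow & Convert have called the Prioritized GEO pyramid. It has three tiers, in descending order of impact: bottom-of-funnel content that ranks (Tier 1), off-site brand mentions on the right sites (Tier 2), and on-site tactics (Tier 3).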

The pyramid inverts the priority order of most AI SEO advice. Most agencies are selling Tier 3 tactics (easy to implement, sounds AI-specific) while underinvesting in Tier 1 and Tier 2, which are where AI visibility actually comes from.

Tier 1: Bottom-of-Funnel Content That Ranks

The foundation of AI visibility is traditional SEO — specifically, content targeting queries where users are evaluating or shopping for products like yours.

This distinction matters enormously.

Top-of-Funnel Content Gets No AI Brand Visibility

Consider a typical informational query: "what is performance marketing?" In traditional SEO, this was worth targeting for traffic volume alone. But ask ChatGPT the same question and it answers directly — no brand recommendations, no citations, no links. The AI has absorbed enough about the definition of performance marketing that it can answer without consulting web sources.

The same pattern repeats across virtually all educational, definitional, and how-to content: LLMs answer it directly and don't recommend brands. They don't need to. The user isn't asking for product recommendations — they're asking for information, and AI can provide that.

This means top-of-funnel content is increasingly a zero-return investment for brand visibility in AI search. It may still drive some Google traffic (AI Overviews don't eliminate all organic results), but it drives essentially no AI brand citations.

Bottom-of-Funnel Content Generates AI Recommendations

The behavior is different when someone asks a product-specific question: "best performance marketing agencies for D2C brands" or "alternatives to [competitor name] for SaaS companies." The AI can't answer these from training data alone — it needs current web information about what options exist and how they compare.

So it searches the web. It looks at what's ranking. It synthesizes those results into a recommendation. And if your content ranks for those queries, you're in the synthesis.

The types of bottom-of-funnel queries worth targeting:

Category keywords: "best [category] [software/agency/tool] for [use case]" — people actively evaluating options in your space.

Comparison keywords: "[competitor] alternatives" or "[brand] vs [competitor]" — people close to a purchase decision who are comparing options.

Jobs-to-be-done keywords: "how to [specific outcome your product helps achieve]" — content that demonstrates your solution while addressing the problem. Best when the content shows the manual method and positions your product as the better path.

Use case keywords: "[your category] for [specific industry or company type]" — narrowing by vertical, company size, or use case creates entry points your generic competitors aren't targeting.
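The four keyword patterns above lend themselves to simple template expansion. The sketch below is one way to generate a candidate list. The template strings mirror the patterns described here, while the sample categories, competitor name, and segments are placeholders to swap for your own.

```python
from itertools import product

# Templates mirroring the four bottom-of-funnel patterns (placeholders in braces).
TEMPLATES = {
    "category": "best {category} for {use_case}",
    "comparison": "{competitor} alternatives",
    "jobs_to_be_done": "how to {outcome}",
    "use_case": "{category} for {segment}",
}

def expand(kind, **options):
    """Fill one template with every combination of the supplied option lists."""
    names = list(options)
    combos = product(*(options[name] for name in names))
    return [TEMPLATES[kind].format(**dict(zip(names, combo))) for combo in combos]

# Hypothetical inputs for a dispatch-software vendor.
keywords = (
    expand("category", category=["dispatch software"],
           use_case=["small fleets", "owner-operators"])
    + expand("comparison", competitor=["ExampleTMS"])
    + expand("use_case", category=["dispatch software"],
             segment=["trucking companies"])
)
```

The output is only a starting list. Each candidate still needs a check against real search demand before it earns a page.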

Write Content That Teaches AI to Recommend You

This is the part most brands miss: how you write your content determines how AI describes you.

When an LLM reads your content and then describes your business to a user, it's synthesizing your own words. It's essentially reading your copy and then pitching you to a potential customer. If your content is generic — "a full-service marketing agency delivering results-driven campaigns" — that's how AI will describe you: generically, indistinguishably.

The content that generates strong AI recommendations is content with specificity:

- Who you work with (industries, company types, revenue range, use cases)

- What specific problems you solve (concrete pain points, not vague benefits)

- How you're different from alternatives (not "we're better" — specific differentiators with proof)

- Results with context (not just "300% ROAS increase" — who achieved it, how long it took, what their starting conditions were)

AI-generated content performs worst for AI SEO — and the reason is structural. LLMs produce content that reflects the average of their training data: grammatically correct, broadly applicable, and completely generic. When another LLM reads that content and tries to describe your brand, it has nothing specific to synthesize. You get generic descriptions of a generic-sounding brand. Human-authored content with specific insights, first-party data, and distinct positioning gives AI something to work with.

Tier 2: Off-Site Brand Mentions on the Right Sites

Once your owned content is creating AI visibility through traditional rankings, off-site mentions amplify that signal by getting your brand surfaced through additional sources.

The mechanism is the same as Tier 1: LLMs search the web and cite what they find. If authoritative industry sites mention your brand in the context of queries you care about, those mentions become additional pathways to AI citation.

Which Sites LLMs Actually Cite

Here's where the common advice breaks down: it's not about getting onto high-authority generic sites. It's about getting onto the sites that rank for your specific target queries.

Data from Profound's analysis of 680 million AI citations across all three major platforms reveals the actual citation landscape:

- Wikipedia accounts for 7.8% of overall citations — but that drops below 3% on AI Mode and under 1% on Perplexity for product queries

- G2 (1.1%) and Gartner (0.7–1.0%) appear consistently for B2B software queries across all platforms

- NerdWallet, BusinessInsider, and Forbes appear at 0.6–1.1% overall — but their share rises significantly for financial and SaaS-adjacent queries

- LinkedIn is cited in nearly 15% of Google AI Mode responses — significantly higher than on ChatGPT or Perplexity

The key insight: citation share is query-type-specific. When someone asks ChatGPT for the best trucking dispatch software, the AI surfaces trucking-specific software review sites, not Wikipedia or general news sites. For financial tools, NerdWallet dominates. For B2B software, G2 and Capterra are consistently cited.

Research on product-related queries specifically found that industry-specific review sites, comparison platforms, and category publications get cited 86% of the time — while generic platforms like Reddit, Quora, and news aggregators get cited only 16% of the time.

The takeaway: your citation targets should be derived from your specific target queries, not from a generic "high authority = good" framework.

How to Identify Which Sites to Target

The process is straightforward:

- Take the bottom-of-funnel keywords from your Tier 1 strategy

- Enter those queries into ChatGPT, Perplexity, and Google AI Overviews

- Note which sources get cited in the responses

- Those are the sites you need to be mentioned on
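If you record the cited URLs by hand while running those queries, a short script can turn the notes into a ranked target list. This is a minimal sketch: the `citations_by_query` structure and the example URLs are hypothetical, and the URLs are assumed to be copied manually from each AI response.

```python
from collections import Counter
from urllib.parse import urlparse

def domain_of(url):
    """Extract the hostname from a URL, dropping a leading 'www.'."""
    host = urlparse(url).hostname or ""
    return host[4:] if host.startswith("www.") else host

def cited_domains(citations_by_query):
    """Count how many queries cite each domain (a domain counts once per query)."""
    counts = Counter()
    for urls in citations_by_query.values():
        domains = {domain_of(u) for u in urls}
        domains.discard("")  # ignore entries that were not valid URLs
        counts.update(domains)
    return counts

# Hypothetical notes: query -> URLs cited in the AI response.
citations_by_query = {
    "best dispatch software for trucking companies": [
        "https://www.fleetreviews.example/best-dispatch-software",
        "https://truckingweekly.example/tools/dispatch",
    ],
    "dispatch software alternatives": [
        "https://www.fleetreviews.example/alternatives",
        "https://www.fleetreviews.example/compare",
    ],
}

targets = cited_domains(citations_by_query).most_common()
```

Counting each domain once per query keeps a single response with many links to the same site from dominating the list.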

This gives you a prioritized, query-specific target list rather than a generic "get on Forbes and G2" checklist.

Citation Outreach Tactics

Once you know which sites LLMs are citing for your target queries, the outreach goal is to get mentioned on those sites in relevant contexts:

Listicle inclusion: Many "best [category] tools" articles get updated regularly. Reaching out to the authors or editors of these pages — with a clear positioning statement and proof of results — can get you added to lists that LLMs use as source material.

Expert contributions: Industry publications frequently accept expert commentary and contributed insights. Being quoted in an article that ranks for a comparison query puts your brand in the AI's citation pool for that query.

Guest content: Writing original research or case studies for relevant industry publications gives you a longer-format mention that helps LLMs understand your specific expertise and positioning.

Review platform presence: On G2, Capterra, Trustpilot, and category-specific review sites, a strong and current presence matters both for traditional SEO and AI citations. These platforms rank well for comparison and alternative queries.

Tier 3: On-Site Tactics (Last Priority)

This is the tier where most AI SEO advice lives — and the tier where impact is lowest.

On-site tactics are designed to help LLMs better understand and extract information from your content. The premise is sound in theory: if you structure content in ways that AI can more easily digest, AI will more accurately represent you.

In practice, the evidence for most of these tactics is thin at best.

llms.txt: No measurable impact in testing. Not adopted by major AI providers as a standard. Worth implementing in ten minutes if you want to experiment, but not worth allocating meaningful resources to.

Structured headings as questions: LLMs handle natural language declarative statements and question formats with equal proficiency. Rewriting all your headings as questions has no documented effect on AI visibility and may hurt human readability.

FAQ sections and key takeaways: These are good for human readers and may marginally help with traditional featured snippets. No clear evidence they improve AI citation rates specifically.

Schema markup for AI: Structured data does help search engines understand content, and it's worth implementing for traditional SEO reasons. But there's no demonstrated benefit specific to AI visibility beyond what traditional schema already provides.

The right framing for Tier 3: implement these tactics after you've invested in Tiers 1 and 2, not instead of them. If your content isn't ranking in traditional search, making it easier for AI to parse won't help — the AI can't find it in the first place.

The Attribution Problem: Measuring AI Visibility

This is where marketers run into a wall that most AI SEO guides don't address honestly: traditional attribution is broken for AI-influenced conversions.

Here's what typically happens:

A potential customer asks ChatGPT for recommendations in your category. ChatGPT mentions your brand. The user opens a new tab, searches your company name on Google, and lands on your homepage. They fill out a contact form two days later.

In your analytics, that conversion shows up as branded organic search, or possibly direct. The AI mention that drove the entire journey is invisible. If you're judging AI SEO success by tracking AI-referral conversions in GA4, you're seeing a fraction of the actual picture.

What You Can Actually Measure

"How did you hear about us?" — Add this field to every lead form, demo request, and sales intake form. Make it open-text, not a dropdown. You'll start seeing explicit mentions of ChatGPT, Perplexity, and "I found you through AI" that never appeared in your analytics before.

AI referral traffic: ChatGPT, Perplexity, and Claude do send some directly trackable referral traffic. Create a custom segment in GA4 for sessions from AI platform referral sources. This won't capture all AI-influenced conversions, but it gives you a baseline.
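The segment itself is configured in the GA4 interface, but the matching logic is easy to see in code, for example when working with exported session data. The hostnames below are assumptions based on the platforms' public domains. Check them against the referrers that actually appear in your own reports.

```python
from urllib.parse import urlparse

# Assumed referrer hostnames; verify against your own referral reports.
AI_REFERRER_HOSTS = {
    "chatgpt.com": "ChatGPT",
    "chat.openai.com": "ChatGPT",
    "perplexity.ai": "Perplexity",
    "claude.ai": "Claude",
}

def ai_source(referrer):
    """Return the AI platform name for a referrer URL, or None if it is not in the list."""
    host = (urlparse(referrer).hostname or "").lower()
    if host.startswith("www."):
        host = host[4:]
    return AI_REFERRER_HOSTS.get(host)

# Hypothetical referrers from an export.
referrers = [
    "https://chatgpt.com/",
    "https://www.perplexity.ai/search?q=dispatch+software",
    "https://www.google.com/",
]
ai_sessions = [r for r in referrers if ai_source(r) is not None]
```

Sessions with no referrer at all return `None` here, which is exactly the traffic that ends up counted as direct.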

Branded organic search growth: If your AI visibility strategy is working, you should see branded search volume increase as more people who encounter your brand through AI later Google your name to learn more. This is a lagging indicator, but a reliable one.

Direct AI visibility tracking: Purpose-built tools now exist specifically for this. Profound tracks brand mentions across ChatGPT, Perplexity, and Google AI Mode at scale — mapping which prompts surface your brand, how frequently, and with what sentiment. Other options include BrandMentions and manual weekly tracking using target queries. At small scale, manual tracking gives you the signal; at larger scale, purpose-built tools give you the pattern.
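For the manual route, a spreadsheet is enough, but the same log is easy to summarize in code. The sketch below assumes a hypothetical log of weekly checks, one row per query and platform, and reports the share of checks in which the brand was mentioned.

```python
from collections import defaultdict

def weekly_mention_rate(log):
    """Map each week to the share of checks in which the brand was mentioned.

    log: iterable of (week, query, platform, mentioned) tuples.
    """
    totals = defaultdict(int)
    hits = defaultdict(int)
    for week, _query, _platform, mentioned in log:
        totals[week] += 1
        hits[week] += 1 if mentioned else 0
    return {week: hits[week] / totals[week] for week in sorted(totals)}

# Hypothetical manual checks, recorded by hand each week.
log = [
    ("2026-W01", "best dispatch software", "ChatGPT", True),
    ("2026-W01", "best dispatch software", "Perplexity", False),
    ("2026-W02", "best dispatch software", "ChatGPT", True),
    ("2026-W02", "best dispatch software", "Perplexity", True),
]

rates = weekly_mention_rate(log)
```

AI responses vary from run to run, so a single week's number means little. The trend over several weeks is the signal.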

Overall organic trajectory: Rather than trying to attribute every conversion to a specific channel, track organic traffic and conversion rates holistically. If your rankings are growing, your AI visibility is likely growing, and your organic conversions should reflect that over time.

The brands succeeding with AI SEO aren't waiting for perfect attribution before declaring it valuable. They ask prospects directly, track what's trackable, monitor AI visibility manually for their top keywords, and look at the overall organic growth trajectory. AI-influenced conversions are real — they're just harder to measure than last-click models suggest.

What This Means for Your 2026 Content Strategy

If you take the GEO Priorities framework seriously, it reshapes several decisions that most marketing teams are currently getting wrong.

Deprioritize top-of-funnel content creation. If you're publishing educational content on broad informational topics primarily to capture organic traffic, AI Overviews are already eating into those click-through rates, and AI search generates no brand visibility from informational queries anyway. Redirect that budget toward bottom-of-funnel content that actually earns citations.

Be more specific, not more prolific. The brands that get recommended by AI tools have content that clearly articulates who they serve, what they do, and why they're different. Publishing more generic content faster doesn't help. Publishing fewer, more specific, more authoritative pieces does.

Build a citation outreach function. This is new for most marketing teams. Someone needs to own the process of identifying which sites LLMs cite for your target queries and systematically working to get your brand mentioned there. This looks like digital PR but with a specific methodology behind which publications to target.

Connect your content strategy, SEO, and answer engine optimization efforts explicitly. The fragmentation between "SEO content" and "brand storytelling" hurts AI visibility. Every piece of content you publish should serve both ranking and brand communication goals — ranking to get in front of AI's web searches, communication specificity to teach AI how to describe you.

Stop trying to game AI's input and start earning AI's outputs. The brands chasing llms.txt and question-formatted headings are trying to influence how AI processes content. The brands building real AI visibility are focused on earning the underlying rankings and mentions that determine what content AI processes in the first place.

The Bottom Line

The AI SEO gold rush has produced a lot of tactics in search of evidence. Most of the advice circulating online is either unproven or actively misdirected — focused on technical optimizations that have no measurable impact when the fundamentals haven't been established.

The brands appearing consistently in ChatGPT and Perplexity responses are the ones doing traditional content and SEO well, with one important adjustment: they're targeting bottom-of-funnel content specifically, because that's the only content type where AI search generates brand citations.

The framework is three tiers, prioritized in order:

First, own your rankings for product-related, buyer-intent queries. Second, get mentioned on the industry-specific sites that LLMs actually cite when those queries are asked. Third — and only after the first two are in motion — experiment with on-site AI optimizations.

This isn't glamorous. It's not a new tool or a proprietary AI-specific methodology. But it's what works, and the gap between brands that understand this and brands chasing shiny tactics is only going to widen as AI search becomes more central to how buyers discover solutions.

Whether you're working on AI marketing strategy or want a structured approach to content and search visibility that serves both Google and the LLMs that depend on it, the fundamentals haven't changed, but the stakes for getting them right have risen.

Frequently Asked Questions

Does an llms.txt file help you appear in ChatGPT or Perplexity?

Based on testing to date, no — not measurably. The llms.txt standard was proposed by Jeremy Howard (fast.ai founder) as a useful convention for AI crawlers. However, OpenAI, Anthropic, and other major LLM providers haven't announced adoption of it as a standard they follow. Testing across sites with and without llms.txt shows no consistent difference in AI visibility. It takes about ten minutes to implement and doesn't hurt anything, but it shouldn't be prioritized over content ranking and off-site mention strategies.

What types of content get your brand recommended by ChatGPT?

Bottom-of-funnel content targeting product evaluation queries. Specifically: category keywords ("best [category] software for [use case]"), comparison keywords ("[competitor] alternatives"), jobs-to-be-done keywords (how to accomplish a specific outcome your product enables), and use-case keywords. Informational and educational content — "what is X," "how does X work" — generates essentially no brand citations because LLMs answer those directly without recommending products.

How do LLMs like ChatGPT decide which brands to recommend?

For product-related queries, LLMs search the web using third-party search engines (ChatGPT uses Bing and Google; Perplexity uses its own crawler plus third-party sources). They look at what's ranking for the query, read those pages, and synthesize the information into a response. Brands that rank well in traditional search for product-related queries are the ones that appear in AI-generated recommendations. Domain authority and the quality and specificity of your content both influence how favorably AI describes you.

Is AI SEO different from traditional SEO?

The foundation is the same — traditional search rankings drive AI visibility. The key adjustment is content strategy: bottom-of-funnel, buyer-intent content becomes even more important in AI search because top-of-funnel informational content generates no brand citations in AI responses. Additionally, how you write content matters more: AI synthesizes your content to describe your brand to users, so specificity about who you serve, what you solve, and why you're different directly shapes how AI portrays you. This is a new dimension to content quality that traditional SEO didn't emphasize.

Should you try to get on Reddit for AI SEO purposes?

Not as a primary strategy. Data on which sources LLMs cite for product-related queries shows industry-specific sites get cited 86% of the time vs. 16% for generic sites like Reddit. Reddit is cited most often for general discussions or opinion-based questions — not for specific product recommendations, which is where brand citations matter. Focus citation outreach on industry review sites, comparison platforms, and niche publications that rank for your specific target queries. That's where LLMs actually look when recommending products in your category.

How do you measure AI search visibility if traditional analytics can't track it?

Use a combination of approaches: (1) Add "how did you hear about us?" as an open-text field on lead forms, and ask the same question on sales calls, to capture self-reported AI attribution. (2) Track referral traffic from AI platforms (ChatGPT, Perplexity, Claude) as a separate segment in GA4. (3) Monitor branded search volume growth, since AI mentions drive people to search your brand name directly. (4) Manually enter your target queries into major AI tools weekly and track whether your brand appears in responses. (5) Track overall organic conversion trajectory rather than trying to attribute every lead to a specific touchpoint.

What's the most important thing to fix first to improve LLM visibility?

Content ranking for bottom-of-funnel queries. Everything else — off-site mentions, on-site optimizations — amplifies your existing visibility. If your site doesn't rank in traditional search for the product-evaluation queries in your category, AI tools won't find your content when they search the web to answer those questions. Start by auditing which buyer-intent keywords you rank for (or don't), then build or improve content targeting those specific queries. That's the lever with the highest impact on AI visibility.